Toph is where competitive programmers compete, practice, and grow. The platform hosts contests, curates thousands of algorithm and data structure problems, and brings together a global community of developers who take their craft seriously.

But running a platform like Toph isn’t just a software challenge — it’s a security and infrastructure challenge. Every submission is arbitrary code from an anonymous user. Every contest is a live event where thousands of people are watching a leaderboard refresh in real time. And any given Tuesday could become a spike day when a university decides to run a contest with 1,000+ participants.

To keep up, Toph needed an architecture that was secure by design, elastic under load, and fast enough to feel instant.

The Challenge

Three problems had to be solved — and solved well:

Secure code execution. User-submitted code runs on Toph’s servers. That means any submission could, in theory, attempt to break out of its environment, access other users’ data, or consume unbounded resources. A naive sandbox isn’t enough.

On-demand scaling. Contest traffic is unpredictable by nature. The system needed to absorb sudden surges without degrading the experience for participants mid-contest.

Real-time contest experience. Competitive programming lives and dies on immediacy. Participants need to see verdicts fast. Moderators need visibility into what’s happening across the board. Latency isn’t just annoying — it undermines the integrity of the contest.

The Solution

A Lightweight, Secure Sandbox

The team built a custom sandboxing tool using Linux namespaces and cgroups, the same kernel primitives that power container runtimes like Docker. Namespaces restrict what a process can see (other processes, the filesystem, the network), while cgroups cap what it can consume. This gave Toph tight, low-overhead isolation for every code submission: each piece of user code runs in its own confined environment, with strict limits on CPU, memory, and system access.

The result is a sandbox that’s both fast and secure — making the most of available server resources without cutting corners on safety.
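To make the two primitives concrete, here is a minimal Go sketch of how a judge might describe a sandboxed run. It is not Toph's actual tool: the `Limits` type, the `cgroupFiles` and `sandboxCommand` helpers, and the sample numbers are all assumptions for illustration. The control file names (`memory.max`, `cpu.max`, `pids.max`) are the standard cgroup v2 ones, and the clone flags come from Go's `syscall` package on Linux.

```go
package main

import (
	"fmt"
	"os/exec"
	"sort"
	"strconv"
	"syscall"
)

// Limits is a hypothetical per-submission resource budget.
type Limits struct {
	MemoryBytes int64 // hard memory cap in bytes
	CPUPercent  int   // share of a single CPU, 1 to 100
	MaxPIDs     int   // process cap, a guard against fork bombs
}

// cgroupFiles maps cgroup v2 control files to the values that enforce l.
// A real sandbox would write these into the submission's cgroup directory
// and then add the child's PID to that directory's cgroup.procs file.
func cgroupFiles(l Limits) map[string]string {
	const periodUS = 100000 // 100 ms scheduling period, in microseconds
	quotaUS := periodUS * l.CPUPercent / 100
	return map[string]string{
		"memory.max": strconv.FormatInt(l.MemoryBytes, 10),
		"cpu.max":    fmt.Sprintf("%d %d", quotaUS, periodUS),
		"pids.max":   strconv.Itoa(l.MaxPIDs),
	}
}

// namespaceFlags asks the kernel for fresh mount, PID, network, IPC, and
// hostname namespaces, so the child cannot see the host's view of them.
const namespaceFlags = syscall.CLONE_NEWNS |
	syscall.CLONE_NEWPID |
	syscall.CLONE_NEWNET |
	syscall.CLONE_NEWIPC |
	syscall.CLONE_NEWUTS

// sandboxCommand prepares (but does not start) a command that will be
// created inside new namespaces. Starting it requires Linux and enough
// privilege to create those namespaces.
func sandboxCommand(path string, args ...string) *exec.Cmd {
	cmd := exec.Command(path, args...)
	cmd.SysProcAttr = &syscall.SysProcAttr{Cloneflags: namespaceFlags}
	return cmd
}

func main() {
	// Sample budget: 256 MiB of memory, half a CPU, at most 16 processes.
	files := cgroupFiles(Limits{MemoryBytes: 256 << 20, CPUPercent: 50, MaxPIDs: 16})

	// Print the settings in a stable order.
	names := make([]string, 0, len(files))
	for name := range files {
		names = append(names, name)
	}
	sort.Strings(names)
	for _, name := range names {
		fmt.Printf("%s <- %s\n", name, files[name])
	}

	cmd := sandboxCommand("/bin/true")
	fmt.Printf("clone flags: %#x\n", cmd.SysProcAttr.Cloneflags)
}
```

The sketch only builds the configuration; it never starts the child or touches `/sys/fs/cgroup`, so it runs without root. A production sandbox would also need a restricted filesystem root, dropped privileges, and wall-clock timeouts, none of which are shown here.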

A Scalable Back-End Architecture

Toph’s Go back-end was built following the Twelve-Factor App methodology, which treats each service as an independently deployable, stateless unit. This meant the system could scale individual components — the judge, the API, the contest runner — based on where demand was actually hitting, rather than scaling everything at once.

When a big contest goes live, Toph scales up exactly what it needs. When it’s over, it scales back down.
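One Twelve-Factor habit that makes this kind of selective scaling practical is reading all configuration from the environment, so the same binary can be started as any component with no code change. The Go sketch below illustrates that idea under stated assumptions: the `APP_ROLE`, `APP_PORT`, and `APP_WORKERS` variable names, the role list, and the defaults are hypothetical and not taken from Toph's codebase.

```go
package main

import (
	"fmt"
	"os"
	"strconv"
)

// Config holds everything a process needs to know about its own role.
// Nothing here is stored on local disk, so any instance is replaceable.
type Config struct {
	Role    string // which component this process runs as
	Port    int    // port to listen on
	Workers int    // number of concurrent workers
}

// validRoles lists the hypothetical components a process can run as.
var validRoles = map[string]bool{"api": true, "judge": true, "contest-runner": true}

// loadConfig builds a Config from environment lookups. The lookup function
// is injected so the logic can be exercised without touching the real
// process environment.
func loadConfig(getenv func(string) string) (Config, error) {
	cfg := Config{Role: "api", Port: 8080, Workers: 1} // assumed defaults

	if v := getenv("APP_ROLE"); v != "" {
		cfg.Role = v
	}
	if !validRoles[cfg.Role] {
		return Config{}, fmt.Errorf("unknown APP_ROLE %q", cfg.Role)
	}
	if v := getenv("APP_PORT"); v != "" {
		port, err := strconv.Atoi(v)
		if err != nil {
			return Config{}, fmt.Errorf("invalid APP_PORT: %w", err)
		}
		cfg.Port = port
	}
	if v := getenv("APP_WORKERS"); v != "" {
		workers, err := strconv.Atoi(v)
		if err != nil || workers < 1 {
			return Config{}, fmt.Errorf("invalid APP_WORKERS %q", v)
		}
		cfg.Workers = workers
	}
	return cfg, nil
}

func main() {
	cfg, err := loadConfig(os.Getenv)
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	fmt.Printf("starting %s on port %d with %d workers\n", cfg.Role, cfg.Port, cfg.Workers)
}
```

With a setup like this, scaling the judge for a large contest amounts to starting more processes with `APP_ROLE=judge`, and scaling down means stopping them, since no instance holds state that the others lack.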

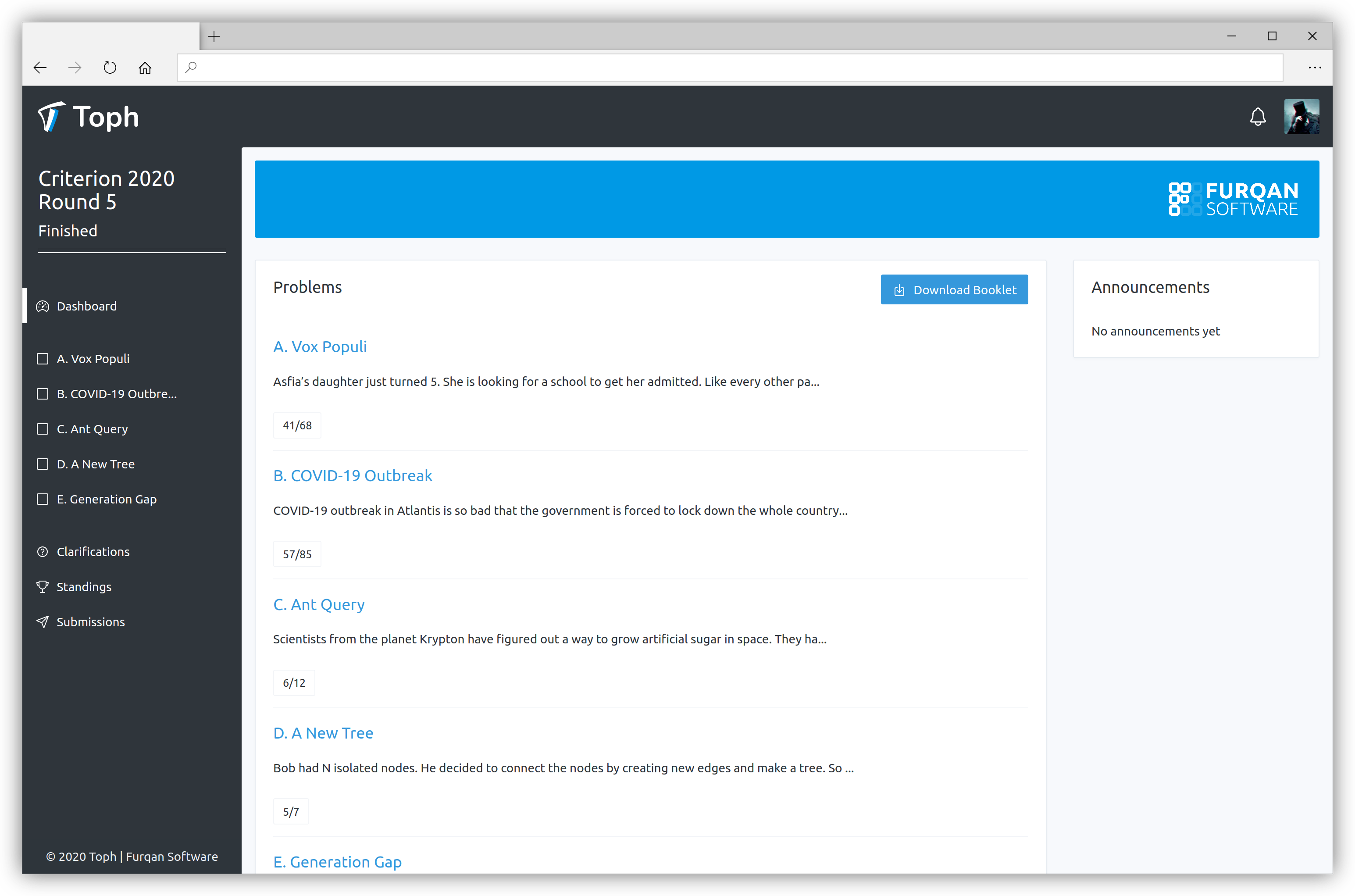

A Real-Time Contest Arena

The front-end team built Toph’s contest arena as a single-page app using Backbone.js, wired up to a PubSub architecture on the back end. Scores update live. Verdicts appear without a page reload. The experience holds up across different network conditions — which matters when participants are joining from around the world.
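The publish/subscribe pattern behind those live updates can be sketched in a few lines of Go. This is an in-memory illustration only, not Toph's implementation: the `Broker` and `Verdict` types, the topic names, and the choice to skip slow subscribers are all assumptions, and a real deployment would need a transport to the browser and a broker shared across processes.

```go
package main

import (
	"fmt"
	"sync"
)

// Verdict is a hypothetical message announcing a judged submission.
type Verdict struct {
	SubmissionID int
	Result       string
}

// Broker fans messages out to subscribers, grouped by topic
// (for example, one topic per contest).
type Broker struct {
	mu   sync.RWMutex
	subs map[string][]chan Verdict
}

// NewBroker returns an empty broker ready for use.
func NewBroker() *Broker {
	return &Broker{subs: make(map[string][]chan Verdict)}
}

// Subscribe registers a new listener on topic and returns its channel.
// buffer is how many undelivered messages the listener may fall behind by.
func (b *Broker) Subscribe(topic string, buffer int) <-chan Verdict {
	ch := make(chan Verdict, buffer)
	b.mu.Lock()
	b.subs[topic] = append(b.subs[topic], ch)
	b.mu.Unlock()
	return ch
}

// Publish sends v to every subscriber of topic and reports how many
// received it. A subscriber whose buffer is full is skipped, so one slow
// client cannot stall delivery to everyone else.
func (b *Broker) Publish(topic string, v Verdict) int {
	b.mu.RLock()
	defer b.mu.RUnlock()

	delivered := 0
	for _, ch := range b.subs[topic] {
		select {
		case ch <- v:
			delivered++
		default: // buffer full: drop for this subscriber
		}
	}
	return delivered
}

func main() {
	b := NewBroker()
	alice := b.Subscribe("contest-1", 4)
	bob := b.Subscribe("contest-1", 4)

	n := b.Publish("contest-1", Verdict{SubmissionID: 1, Result: "Accepted"})
	fmt.Println("delivered to", n, "subscribers")
	fmt.Println("alice sees:", (<-alice).Result)
	fmt.Println("bob sees:", (<-bob).Result)
}
```

Dropping messages for a lagging subscriber is one possible trade-off; a client that misses an update could refetch the current standings instead of blocking the whole contest feed.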

The Results

“Zero sandbox breaches across nearly 2 million submissions.”

Since launch, Toph has:

- Hosted hundreds of contests, including events with 1,000+ simultaneous participants

- Built a library of 2,100+ problems spanning varying difficulty levels, with 450+ problem tutorials

- Processed 1,928,000+ total submissions — with zero sandbox breaches

- Supported 49 programming languages across all contests and practice problems

- Delivered a contest moderation dashboard that makes running events genuinely easy

What It Means

Toph can now run a large-scale contest on short notice, absorb a traffic spike without scrambling, and hand every submission a verdict quickly and safely. The architecture does more than support today's scale: it is built to grow as the platform does.